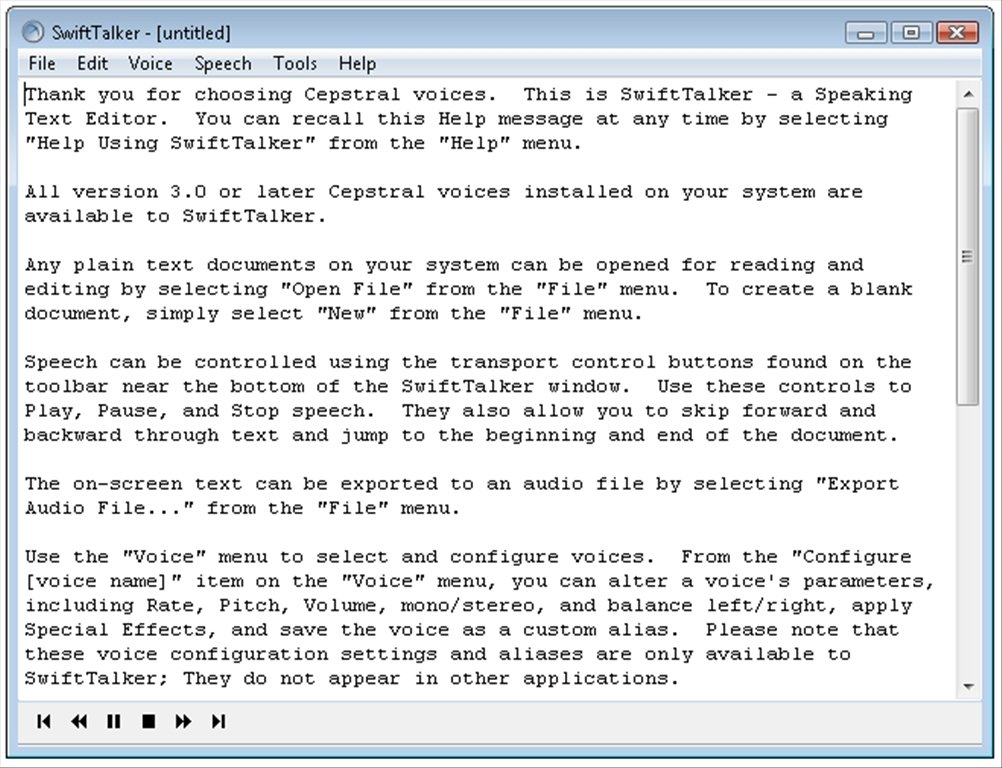

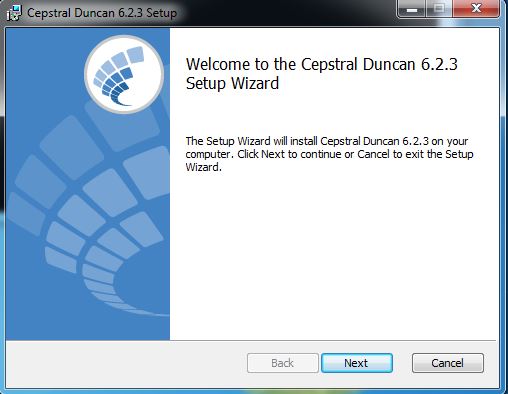

Pablo, as of version 5.1 of the Cepstral voices, there is no such thing as a demo version of a voice. There are only unlicensed voices, which subsequently become licensed. The unlicensed version differs only in that it speaks the license reminder. Once the customer receives the e-mail with the voice key and enters the license as described below, the reminder is removed when the voice speaks. Please note that the reminder does not follow the speech settings (it won't speed up or slow down, for example).

On Windows: use Control Panel -> Cepstral Tools panel, and make sure it runs at the appropriate privilege level. Windows XP requires that you be a member of the Administrators group, but Windows Vista and Windows 7 additionally require 'Run as administrator'. There is a bug in Vista and Windows 7: when Cepstral Tools is not invoked using 'Run as administrator', both operating systems fail silently to write the license file, which causes the voice to revert mysteriously to being unlicensed at some later time.

On the Mac: use the Cepstral Voices Preference Pane.

On Linux and Unix variants: customers need to use the swift command line (which is also available on Windows and Mac). Run `swift --reg-voice` and enter the license information.

For all platforms: the details are case sensitive and punctuation sensitive. The letters l and O never appear in the key (it is a hex value, so those characters are always the digits 1 and 0). Keys are not compatible between 16kHz and 8kHz voices, so please make sure you downloaded the correct voice and bought the correct license key for it. The 16kHz Allison voice is called 'Allison', whereas the 8kHz Allison is called 'Allison-8kHz', and so on for the other voices.
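Because the Windows failure described above is silent, a script that launches the registration tool may want to check its own privilege level first. The following is only a sketch: `is_windows_admin` is a hypothetical helper name, not part of any Cepstral tool, and it relies on the Windows shell function `IsUserAnAdmin` reached through Python's `ctypes`.

```python
import ctypes
import sys


def is_windows_admin():
    """Return True/False on Windows, or None on other platforms.

    Hypothetical helper: the text above only describes the privilege
    problem on Windows, so other platforms are reported as "not applicable".
    """
    if sys.platform != "win32":
        return None
    try:
        # IsUserAnAdmin returns non-zero when the process is elevated.
        return bool(ctypes.windll.shell32.IsUserAnAdmin())
    except (AttributeError, OSError):
        # If the check itself is unavailable, assume not elevated.
        return False


if __name__ == "__main__":
    status = is_windows_admin()
    if status is False:
        print("Not elevated: the license file may silently fail to be written.")
    elif status is True:
        print("Elevated: OK to enter the license.")
    else:
        print("Not on Windows: this check does not apply.")
```

Returning `None` off Windows is a design choice to avoid implying anything about privilege requirements on Mac or Linux, which the text does not state.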
This release was created for you, eager to use Cepstral Swifttalker with Marta 3.2.1 in full and without limitations. Our intentions are not to harm the Cepstral software company but to give the possibility to those who cannot pay for any piece of software out there. This should be your intention as well, as a user: to fully evaluate Cepstral Swifttalker with Marta 3.2.1 without restrictions and then decide. If you are keeping the software and want to use it longer than its trial period, we strongly encourage you to buy the license key from the official Cepstral website. Our releases are to prove that we can! Nothing can stop us; we keep fighting for freedom despite all the difficulties we face each day. Last but not least is your own contribution to our cause. You should consider submitting your own serial numbers or sharing other files with the community, just as someone else helped you with the Cepstral Swifttalker with Marta 3.2.1 serial number. Sharing is caring, and that is the only way to keep our scene, our community, alive.

In the EMIME project, we developed a mobile device that performs personalized speech-to-speech translation such that a user’s spoken input in one language is used to produce spoken output in another language, while continuing to sound like the user’s voice. We integrated two techniques into a single architecture: unsupervised adaptation for HMM-based TTS using word-based large-vocabulary continuous speech recognition, and cross-lingual speaker adaptation (CLSA) for HMM-based TTS. The CLSA is based on a state-level transform mapping learned using minimum Kullback–Leibler divergence (KLD) between pairs of HMM states in the input and output languages.

Thus, an unsupervised cross-lingual speaker adaptation system was developed. End-to-end speech-to-speech translation systems for four languages (English, Finnish, Mandarin, and Japanese) were constructed within this framework.

In this paper, the English-to-Japanese adaptation is evaluated. Listening tests demonstrate that adapted voices sound more similar to a target speaker than average voices and that differences between supervised and unsupervised cross-lingual speaker adaptation are small. Calculating the KLD state-mapping on only the first 10 mel-cepstral coefficients leads to huge savings in computational costs, without any detrimental effect on the quality of the synthetic speech.
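The minimum-KLD state mapping mentioned above can be illustrated with a small sketch. It is not the EMIME implementation. It assumes each HMM state is summarised by a single Gaussian with diagonal covariance, given as a `(means, variances)` pair, and it uses a symmetrised KLD and maps each output-language state to its nearest input-language state. The text specifies none of these choices, and the function names are hypothetical. The `dims` argument restricts the comparison to the leading coefficients, mirroring the "first 10 mel-cepstral coefficients" idea.

```python
import math


def kl_diag_gauss(mean_p, var_p, mean_q, var_q):
    """KL(p || q) for two Gaussians with diagonal covariance."""
    total = 0.0
    for mp, vp, mq, vq in zip(mean_p, var_p, mean_q, var_q):
        total += math.log(vq / vp) + (vp + (mp - mq) ** 2) / vq - 1.0
    return 0.5 * total


def symmetric_kld(state_p, state_q, dims=None):
    """Symmetrised KLD over the first `dims` coefficients (all if None)."""
    mean_p, var_p = state_p
    mean_q, var_q = state_q
    d = slice(0, dims)
    return (kl_diag_gauss(mean_p[d], var_p[d], mean_q[d], var_q[d])
            + kl_diag_gauss(mean_q[d], var_q[d], mean_p[d], var_p[d]))


def map_states(output_states, input_states, dims=10):
    """For each output-language state, return the index of the
    input-language state with minimum symmetrised KLD (assumed direction)."""
    mapping = []
    for out_state in output_states:
        best = min(range(len(input_states)),
                   key=lambda i: symmetric_kld(out_state, input_states[i], dims))
        mapping.append(best)
    return mapping


if __name__ == "__main__":
    # Toy two-dimensional states: (means, variances).
    input_states = [([0.0, 0.0], [1.0, 1.0]), ([5.0, 5.0], [1.0, 1.0])]
    output_states = [([4.5, 5.2], [1.0, 1.0]), ([0.2, -0.1], [1.0, 1.0])]
    print(map_states(output_states, input_states, dims=2))
```

Since the divergence is a sum over dimensions, truncating to the first `dims` coefficients reduces the cost of each state-pair comparison in proportion to the number of dimensions dropped.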
